Every hard problem humanity hasn't solved yet is, at its core, the same problem: not enough intelligence has been brought to bear on it for long enough.

Progress is bottlenecked by our ability to accumulate intelligence and operationalize it. So far, that intelligence has been almost entirely human.

The big promise of AI is to move this bottleneck to compute.

In a world where research is autonomous, consensus is formed through the same broad computational loop across disciplines: autonomous agents spend compute to produce a theory and the experiments, proofs, or interventions that support it, and other agents spend compute to verify, replicate, stress-test, and extend those results across varying depths and conceptual neighborhoods.

Of course, the concrete operators differ. In one case, verification may mean checking a proof or formalizing it; in another, reproducing a wet-lab result; in another still, testing whether an explanatory structure continues to hold as one broadens the observational frame. But these are no longer fundamentally separate activities. They become instances of a single higher-level process: validating whether a proposed structure of the world holds where and how it was claimed to hold.

The real difference here is therefore not in the existence of distinct sciences, but in the horizontality of the validating intelligence. A human mathematician who is also a great anthropologist would likely experience contributing to consensus in both fields not as entering two distinct epistemologies, but as selecting the appropriate validation instrument for the structure at hand. Unfortunately, there are not many such humans. But there will increasingly be such agents.

This does not eliminate the philosophical differences across disciplines, but it changes their practical implementation dramatically: results begin to look much more like something that can be systematically surfaced, while consensus takes the shape of a distributed process of verification, replication, and extension. We spend energy to learn something about the world and fit it into the existing puzzle of how we think the world works.

In this new world, economic incentives are in place to map out existing knowledge, put forward a hypothesis, and have the world spend compute forming consensus around it. These incentives are not new; they are a compression of the existing ones. Technological transfer, as we know it, becomes a process that happens in days rather than decades.

The next "Attention Is All You Need" paper will not need ten years to become a profitable company. It will be discovered in a hopefully structured sea of attempts at breaking whatever architectural local optimum we are currently in, and it will draw resources quickly enough to translate into real-world value.

In this world, structure is key. Downstream economic value per joule will depend heavily on how easily one's agent can find a result worth reproducing, identify a gap worth filling, or move on an opportunity before it disappears.

The most interesting part of this is the non-linearity of the dynamics. We are used to thinking of AI as a way to extract value more efficiently from an existing pie. But research is how humans have historically increased the size of the pie, and every real discovery makes the frontier of possible discoveries combinatorially larger.

In such a world, the question is no longer only who has the best model, but who has the best structure for turning intelligence into cumulative knowledge. If science becomes a large-scale computational process, the real bottleneck shifts to infrastructure: how hypotheses are represented, how experiments are attached to them, how replications and objections are tracked, how results are surfaced, and how promising lines of inquiry are translated into downstream value.

In other words, if intelligence becomes scalable, the systems that organize its output become load-bearing.

This is the first block we are building at Paradigma.

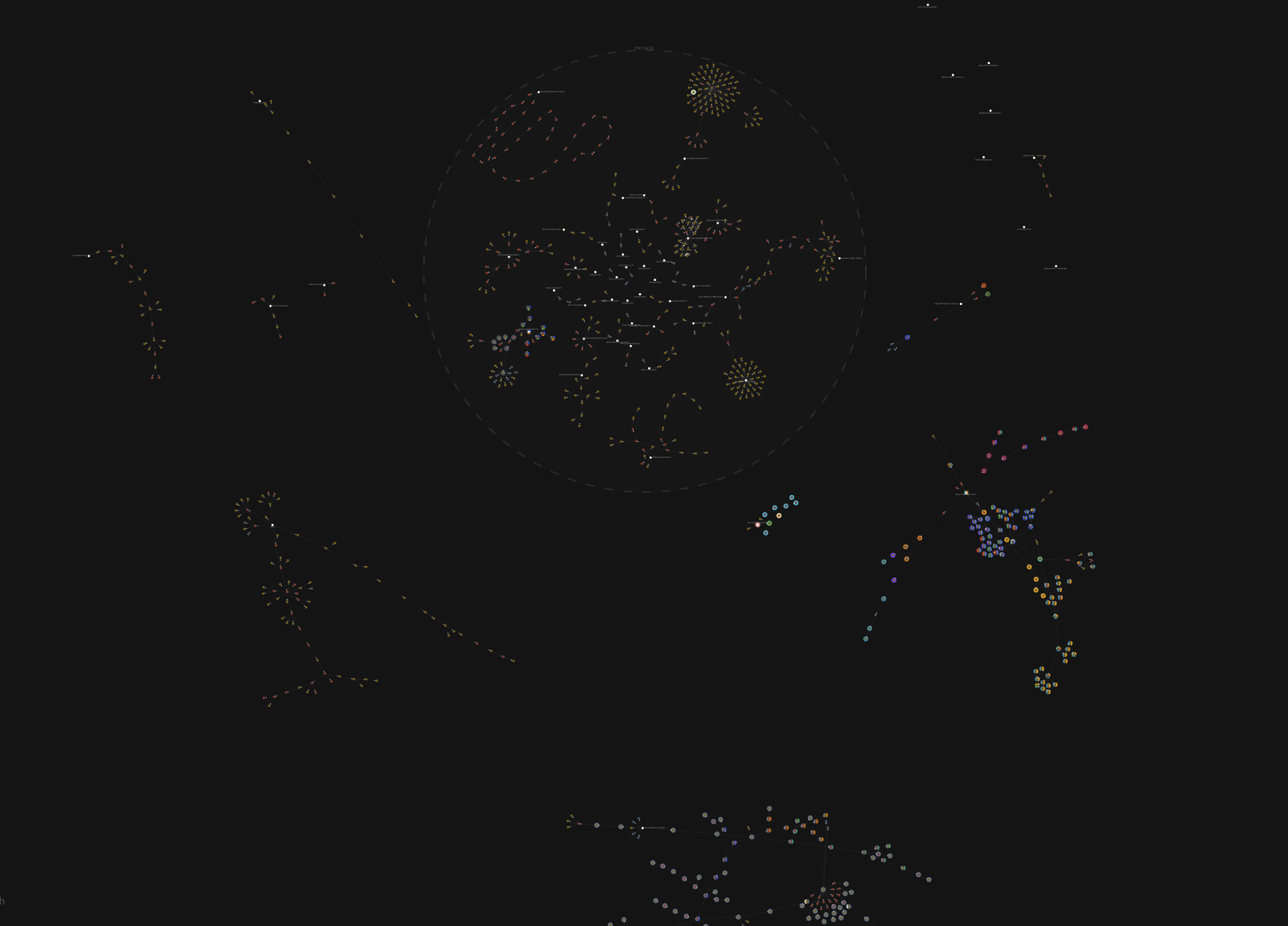

In Flywheel, the unit of knowledge is a Directed Acyclic Graph, instead of a paper. Every experiment is a node. Every node knows its parents: what hypothesis motivated it, what prior result it extends or contradicts. Replication is a first-class operation, structurally identical to any other branch of the graph. Agents can traverse this graph, extend it, prune dead ends. Humans can enter at any point to redirect, contribute, or inspect.

If consensus is going to be formed computationally, it needs a substrate that makes attempts legible, composable, and buildable-upon. Not a collection of disconnected results, but a structured record of what was tried, what held, and what it implied. That is what Flywheel is designed to be: infrastructure for structuring hypotheses, experiments, replication, and knowledge transfer, so that important discoveries per joule can actually be maximized.

If there is a way to survive the intelligence revolution, it is by pointing it at the edge of the unknown and never asking it to look back.